People are usually excited to play with the tools they have around, and so was I. I have been doing sports with various Garmin devices for several years and was always curious what you could find by applying forensic tools to them. So it might be interesting to forensicate your Garmin watch, bike computer or other Garmin device. This blog post goes over some of the opportunities, how to do it, and what I learned along the way.

If you are curious why you might use something like that, have a look at a recent entry from dcrainmaker.

Take an image

The first question is always whether we can take a forensic image. For this, the watch needs to be detected as a mass storage device (mounting it read-only is recommended!). For my testing I used a Garmin Forerunner 45S. Once you attach it via USB, it will show up:

$ diskutil list

/dev/disk2 (external, physical):

#: TYPE NAME SIZE IDENTIFIER

0: GARMIN *10.3 MB disk2

$ diskutil unmountDisk /dev/disk2

Unmount of all volumes on disk2 was successful

We can now take a dd image. E01 would be even better, but for the purposes of this article I did not bother. If you find yourself in a situation where your evidence might be used in court, please use a write-blocker and have the imaging process tested and verified!

$ sudo dd if=/dev/disk2 of=garmin_backup.img.dd bs=512

20066+0 records in

20066+0 records out

10273792 bytes transferred in 23.258457 secs (441723 bytes/sec)

Now we have a dd image of the watch and can continue investigating it without messing with the device itself. It is safe to unplug the device now.

The device I tested used a FAT16 file system. If you want to learn more about taking forensic images, have a look at https://www.cyberciti.biz/faq/how-to-create-disk-image-on-mac-os-x-with-dd-command/
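Since the image is what all later analysis runs on, it can be worth recording a hash of it right after acquisition, so you can later show that it has not changed. The following is only a minimal sketch of that idea using the Python standard library; the function name `sha256_of_file` is my own and the file name is the one from the dd command above.

```python
import hashlib
from pathlib import Path


def sha256_of_file(path, chunk_size=1024 * 1024):
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with Path(path).open("rb") as handle:
        # Read fixed-size chunks so large images do not need to fit in memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


if __name__ == "__main__":
    image = Path("garmin_backup.img.dd")  # file name from the dd command
    if image.exists():
        print(image.name, sha256_of_file(image))
```

Running the same function again at a later point and comparing the two digests is a cheap way to check that the working copy is still identical to what was acquired.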

Run Plaso on the file

The first attempt is to simply run Plaso (Plaso Langar Að Safna Öllu, known for “super timeline all the things”) on the image to get a timeline. The easiest way is to use the Docker version of Plaso:

docker run -v ./scripts/garmin/:/data log2timeline/plaso log2timeline --partition all /data/garmin_backup.img.dd.plaso /data/garmin_backup.img.dd

The resulting Plaso file can then be uploaded directly to, for example, your Timesketch instance. It will mostly show file-related attributes and events, but it is still a good starting point.

Import Plaso file in Timesketch

The interesting files are in the Activities folder. Besides that, we can see the “expected” file-related timestamps that Plaso can extract. To learn more about Timesketch, visit https://timesketch.org

So let’s continue and try to find out more about the activity .fit file.

.fit file

File format

There is a great description of the file format available at https://www.pinns.co.uk/osm/fit-for-dummies.html so no need to further investigate at the moment.
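To get a quick feel for the format without any library, you can decode the file header by hand. The sketch below is based on my understanding of the FIT header layout (header size, protocol version, profile version, data size, the ASCII signature `.FIT`, and an optional 2-byte CRC when the header is 14 bytes long). The function name and the dictionary keys are my own choices, so treat it as an illustration rather than a reference parser.

```python
import struct


def parse_fit_header(data):
    """Decode the fixed part of a FIT file header from raw bytes."""
    if len(data) < 12:
        raise ValueError("a FIT header is at least 12 bytes long")
    # Little-endian: uint8, uint8, uint16, uint32, 4 ASCII bytes
    header_size, protocol, profile, data_size, signature = struct.unpack(
        "<BBHI4s", data[:12]
    )
    if signature != b".FIT":
        raise ValueError("missing .FIT signature")
    header = {
        "header_size": header_size,
        "protocol_version": protocol,
        "profile_version": profile,
        "data_size": data_size,
    }
    # 14-byte headers carry a trailing CRC (little-endian uint16)
    if header_size >= 14 and len(data) >= 14:
        header["header_crc"] = struct.unpack("<H", data[12:14])[0]
    return header


if __name__ == "__main__":
    # Synthetic header for demonstration, not taken from a real device
    sample = struct.pack("<BBHI4sH", 14, 0x20, 2132, 1000, b".FIT", 0)
    print(parse_fit_header(sample))
```

On a real activity file you would pass in the first 14 bytes, for example `parse_fit_header(open(path, "rb").read(14))`, where `path` points to one of the files from the Activities folder.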

How to parse .fit files

Fortunately, there is already an MIT-licensed project on GitHub:

https://github.com/dtcooper/python-fitparse with a lot of different test files to play with.

Using this Python library we can parse the file. What we are definitely interested in is the timestamp of each entry.

Depending on the event frequency there might be a lot of files in there.

After a bit of work I managed to put every message into a pandas DataFrame, and also noticed that it might be interesting to keep the device metadata as well.

Here is my code (I ran it in a Colab notebook):

import pandas as pd

def process_file(fitfile, filename):
    """Collect the device_info and record messages of a parsed FIT file in a DataFrame."""
    # filename is currently unused; it is kept so the signature stays the same
    all_entries = []

    # Keep the device metadata (e.g. serial number) as rows of their own
    for device in fitfile.get_messages("device_info"):
        all_entries.append(device.get_values())

    # Iterate over all messages of type "record"
    # (other types include "device_info", "file_creator", "event", etc)
    for record in fitfile.get_messages("record"):
        # Records can contain multiple pieces of data (ex: timestamp, latitude, longitude, etc)
        all_entries.append(record.get_values())

    df = pd.DataFrame(all_entries)

    # Without a timestamp column there is nothing to put on a timeline
    if 'timestamp' not in df:
        return None

    df['datetime'] = pd.to_datetime(df['timestamp'])
    return df

The function returns a pandas DataFrame that can then easily be imported into Timesketch for further analysis.
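One thing to keep in mind for the import: as far as I know, Timesketch expects every event to carry a `message`, a `datetime` and a `timestamp_desc` field. The sketch below illustrates adding those to per-message dicts (the kind of dict `get_values()` returns) and serialising them as JSONL using only the standard library. The function names, the `timestamp_desc` label and the message text are made up for illustration, and it assumes that FIT timestamps without time zone information are UTC.

```python
import json
from datetime import datetime, timezone


def to_timesketch_event(values, source_name):
    """Return a copy of one message dict with Timesketch-style fields, or None."""
    stamp = values.get("timestamp")
    if not isinstance(stamp, datetime):
        return None  # nothing to place on a timeline
    if stamp.tzinfo is None:
        # Assumption: naive timestamps are UTC
        stamp = stamp.replace(tzinfo=timezone.utc)
    event = {key: value for key, value in values.items() if key != "timestamp"}
    event["datetime"] = stamp.isoformat()
    event["timestamp_desc"] = "FIT message time"  # hypothetical label
    event["message"] = f"FIT entry from {source_name}"  # hypothetical text
    return event


def to_jsonl(entries, source_name):
    """Serialise all entries that have a timestamp as one JSON object per line."""
    events = (to_timesketch_event(entry, source_name) for entry in entries)
    # default=str keeps values that json cannot encode natively
    return "\n".join(json.dumps(e, default=str) for e in events if e is not None)
```

The resulting text can be written to a `.jsonl` file and uploaded like any other timeline. If you stay with the DataFrame route instead, adding equivalent `message` and `timestamp_desc` columns before the import should serve the same purpose.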

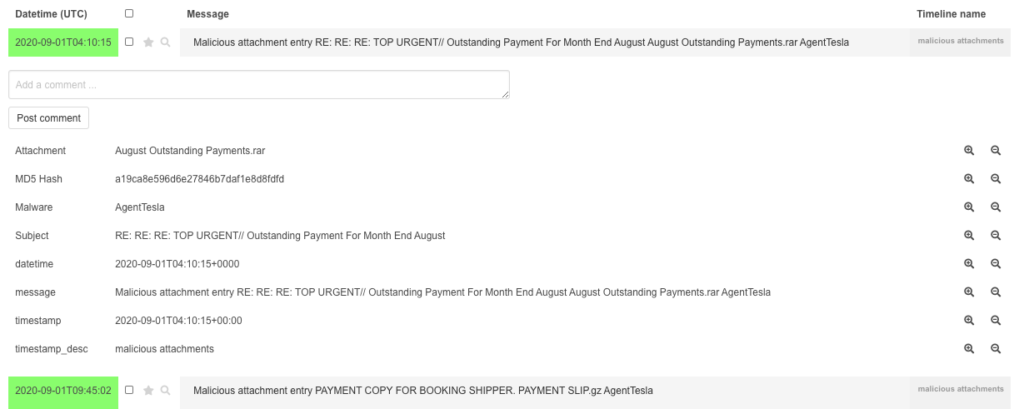

Watch it in Timesketch

For testing purposes I used the following .FIT file: https://github.com/dtcooper/python-fitparse/blob/master/tests/files/2019-02-17-062644-ELEMNT-297E-195-0.fit

And in Timesketch it looks like:

You can also search for the serial number of the Garmin device:

Pretty cool!

Analyse your data with pandas

Once the data is in Timesketch, you can use sketch.explore to get it back with the following command:

# ts_client is assumed to be an authenticated Timesketch API client created earlier in the notebook
sketch = ts_client.get_sketch(2)

cur_df = sketch.explore(
    'speed:*',
    as_pandas=True,
    return_fields='datetime,speed'
)

You can then have some fun and plot the result to analyse the speed in your files:

cur_df['speed'].plot(linewidth=0.5);

Or with:

sketch = ts_client.get_sketch(2)

cur_df = sketch.explore(

'position_lat:*',

as_pandas=True,

return_fields='position_lat,position_long'

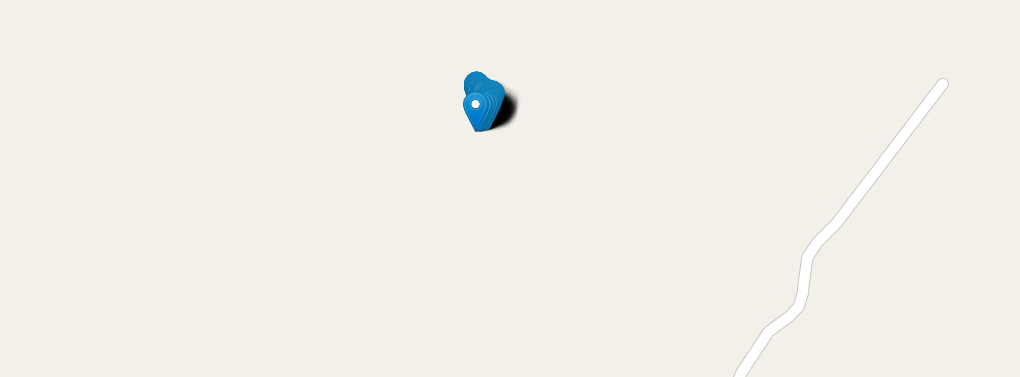

)Return all entries with GPS coordinates. With some extra packages:

!pip install -q folium

import folium

And the following code:

# FIT stores positions in semicircles; multiply by 180 / 2**31 to get degrees
cur_df['lat'] = cur_df['position_lat'] * (180 / 2**31)

cur_df['lon'] = cur_df['position_long'] * (180 / 2**31)

cur_df.info()

map1 = folium.Map(location=(49.850, 8.2462), zoom_start=3)

# Add every GPS position to the map as a marker
for index, row in cur_df.iterrows():
    folium.Marker(location=(row['lat'], row['lon']), popup='bla').add_to(map1)

display(map1)

With that, you can actually plot a map with the coordinates.
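A note on the coordinate columns used for the map: the FIT format stores position_lat and position_long as signed 32-bit "semicircles", where 2^31 semicircles correspond to 180 degrees. That is why the raw values look odd at first. Here is a small sketch of the conversion; the helper name and the constant name are my own.

```python
# 2**31 semicircles span 180 degrees
SEMICIRCLES_TO_DEGREES = 180.0 / 2**31


def semicircles_to_degrees(value):
    """Convert a FIT position value from semicircles to decimal degrees."""
    return value * SEMICIRCLES_TO_DEGREES


if __name__ == "__main__":
    # A quarter of the full signed range is a right angle
    print(semicircles_to_degrees(2**30))
```

The same factor works on a whole pandas column at once, for example `cur_df['position_lat'] * SEMICIRCLES_TO_DEGREES`, as long as the column holds numeric values.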

And much more is possible. I hope you liked this article. The notebook is also available on GitHub. The idea here was not to examine everything in detail, but to give a foundation for further work on how to get the data in and get started.